The story of how the UK fell out of love with an AI bill started with big hopes for strong rules on powerful AI systems. Lawmakers pushed for laws to handle risks from advanced technology, but the plans hit roadblocks and faded away.

Key Summary

- UK leaders first promised an AI safety bill after a global summit in 2023.

- Plans for rules on frontier AI risks stalled under budget cuts and new priorities.

- The government dropped its focus on an AI law, choosing flexible guidelines over hard laws.

- Tech industry reaction was mixed, with some cheering the freedom and others wanting clear rules.

- This AI policy U-turn aligns with a pro-growth approach.

- Future UK AI regulation may come sector by sector, not all at once.

How the UK fell out of love with an AI bill: The Early Promise

Excitement built fast after the 2023 AI Safety Summit at Bletchley Park. World leaders, including then-Prime Minister Rishi Sunak, talked about dangers from super-smart AI models. The UK seemed ready to lead with a dedicated AI bill to set global standards.

Lawmakers in the House of Lords introduced the Artificial Intelligence (Regulation) Bill in 2023. It aimed to create an AI authority, demand transparency from developers, and protect users from harms. Parliamentary debates on AI highlighted needs such as public input on high-risk systems.

But progress slowed as the 2024 general election loomed. Parliament was dissolved, and the bill fell without becoming law.

Shift Under New Leadership

When Labour took power in July 2024, Prime Minister Keir Starmer promised a fresh look at tech rules. Many expected the delay in AI legislation to end with bold action. Yet the King’s Speech did not include a dedicated AI bill.

Instead, the government focused on sector-specific steps, like data protection tweaks. This matched its view that heavy rules could slow UK growth in a fast-moving AI race.

Key Reasons for the AI Policy U-turn

Budgets played a big role. The new government canceled £1.3 billion in planned technology and AI projects, including supercomputers, to fix spending holes it said the previous government had left. It also dropped efforts on an AI law to save cash and pick winners carefully.

Debates over artificial intelligence rules also split opinion. Some feared over-regulation would push AI firms to places like the US, where rules stay light. Starmer stressed seizing tech opportunities over tight controls, as seen in talks with US President Donald Trump.

Industry voices added pressure. Tech groups wanted flexibility to innovate while managing frontier AI risks themselves, while creators pushed for copyright fixes in AI training. Efforts like a voluntary code failed to unite everyone.

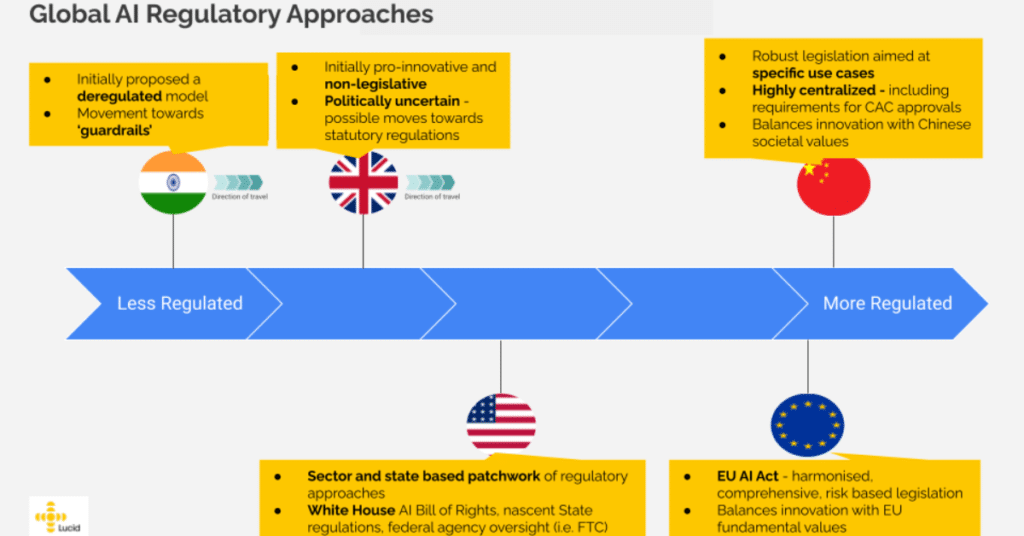

This table shows the clear pivot from strict laws to a lighter touch.

Tech Industry Reaction and User Impacts

Businesses welcomed the change. Firms building AI tools saw less red tape as a win, letting them scale without constant legal checks. For developers, this means easier work on new models without mandatory reporting.

But not everyone cheered. Safety experts worry about gaps in handling biases or errors in AI decisions. Users might face more risks in areas like hiring or loans if automated systems lack oversight. Everyday people could see uneven protections across apps.

Watchers of UK AI regulation point to the EU’s AI Act as a contrast. There, a tiered system bans unacceptable-risk uses outright and places strict obligations on high-risk ones. The UK bets on principles-based guidance, updated as the technology evolves. For more on global views, see UK Parliament records.

What Dropped Plans Mean for AI Future

The bill’s fade opens doors for targeted fixes. Recent debates on the Data (Use and Access) Bill touched on AI in automated decisions but left out major copyright clauses. The government now plans reports on the use of AI training data by year’s end.

For users, this means watching sector rules closely. Health AI might get tight checks, while chatbots stay loosely regulated. Developers gain room to experiment, boosting the UK as an AI hub.

AI legislation could return to the agenda if scandals hit, such as harms from deepfakes. Until then, voluntary codes and watchdogs fill the gap. Explore BBC News for live updates on policy shifts.

Businesses should prep for flexible rules. Build in safety features now to stay ahead. Safety stays key amid frontier AI risks.

Lessons and Next Steps

This story shows how politics, money, and tech speed shape laws. The UK chose growth over speed on rules, betting it pays off long-term. Users win with faster tools but must push for balance.

Dreams of an AI safety bill are paused, but parliamentary talks on AI continue. Stay informed via The Guardian. For related reads, dive into AI regulation trends or future AI laws.

What does this mean for you? Expect more AI in daily life with fewer hurdles, and keep calling for smart safeguards. The UK keeps innovating, eyes wide open.