The ZAYA1 AI model has reached a major milestone, one of the most notable achievements in next-gen artificial intelligence this year. Its rapid progress is driven by training on AMD GPUs, which sets a new benchmark for high-performance AI compute and scalable model development.

Key Highlights

- Achieves record training speed using AMD Instinct accelerators

- Raises standards for advanced AI model development

- Demonstrates major improvements in generative AI training efficiency

- Signals a strong future for AI hardware acceleration

- Supports emerging next-gen AI systems at scale

ZAYA1 Milestone: What Makes This Breakthrough Important?

The ZAYA1 milestone is more than a performance update. It reflects how quickly the industry is evolving. Training on AMD Instinct accelerators reduces training time while expanding compute capacity, which shows what modern, scalable AI infrastructure can accomplish.

This progress comes as tech giants push the boundaries of image generation and compute innovation. Recent releases such as Google’s Nano Banana Pro, for example, show how rapidly generative AI systems are evolving.

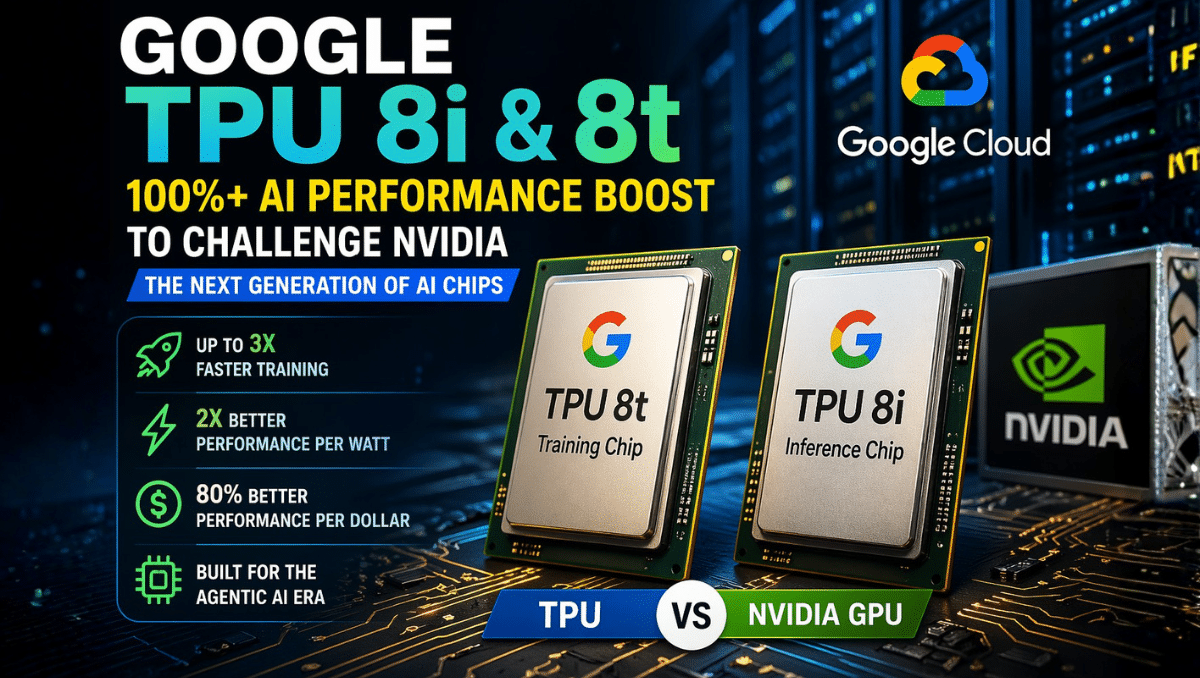

AMD GPUs: A New Engine for AI Hardware Acceleration

One of the biggest reasons behind ZAYA1’s success is the shift toward AI hardware acceleration on AMD’s high-density compute architecture. Compared with traditional CPU-centric systems, AMD GPUs are optimized for:

- Parallel processing

- Lower energy consumption

- Faster tensor operations

- Higher memory throughput

This makes them well suited to advanced AI model development, especially for models with billions of parameters that need long, continuous training runs.

As demand for next-gen AI systems grows, companies are adopting heterogeneous compute stacks that mix AMD, NVIDIA, and custom accelerators to reach new levels of performance.

Generative AI Training Efficiency: Where ZAYA1 Stands Out

ZAYA1’s generative AI training efficiency is particularly notable. The model’s architecture is designed for:

- Faster iteration cycles

- Reduced latency

- Low-cost scalability

- Real-time fine-tuning

This positions it strongly in a competitive ecosystem where speed and efficiency drive market leadership.

The broader AI landscape is already seeing a wave of tool integration, including the rapid expansion of app-based AI workflows. That trend is useful context for understanding how models like ZAYA1 fit into the larger AI applications landscape.

Scaling Toward the Future of AI Innovation

The momentum behind ZAYA1 reflects an industry-wide shift toward hardware-level AI innovation, where optimized architectures significantly reduce development friction. As scalable AI infrastructure becomes essential for enterprise-level deployment, models like ZAYA1 show where future training systems are headed.

In the coming months, industry experts expect more organizations to move toward hybrid training environments. These environments combine AMD GPUs with cloud-native orchestration and edge compute to improve efficiency and reduce operational costs.

Conclusion

ZAYA1’s latest milestone is a clear signal that the AI hardware race is accelerating. Trained on AMD GPUs, ZAYA1 demonstrates how advances in compute power and hardware acceleration are transforming next-gen AI systems.

As the model continues to evolve, its contributions to high-performance AI compute and efficient scaling are likely to play an important role in shaping the future of the industry.