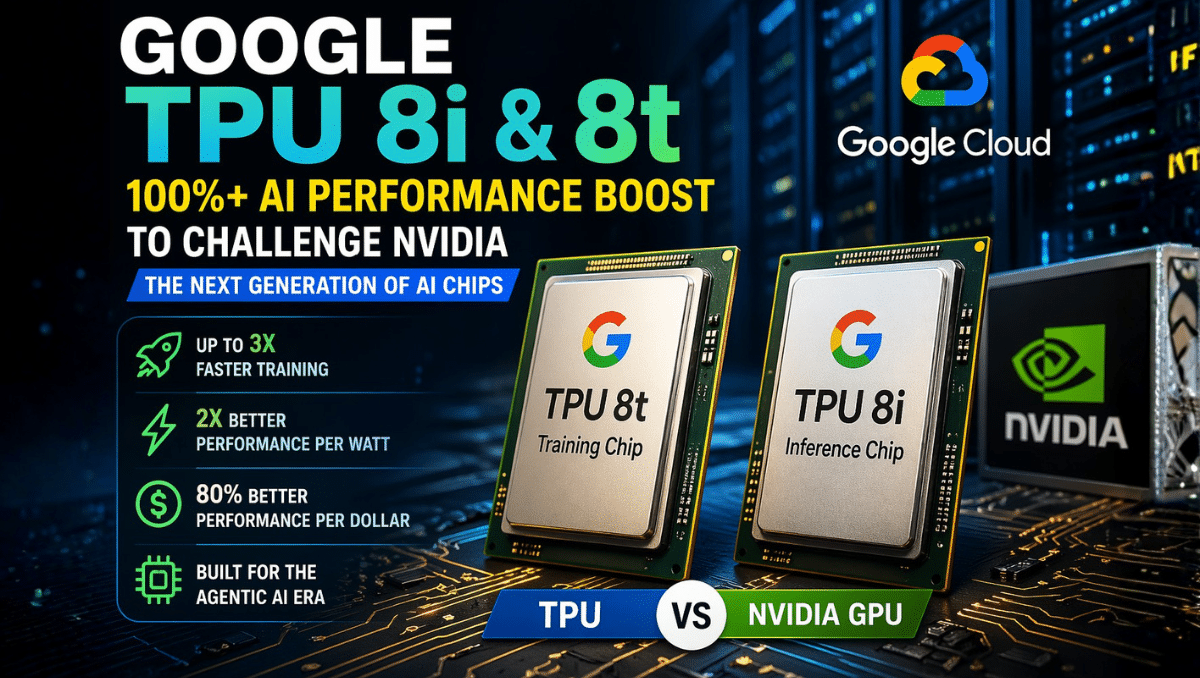

Google has introduced its latest 8th-generation Tensor Processing Units (TPUs) the TPU 8i and 8t—marking a major leap in AI hardware. These new chips deliver over 100% performance improvement in real-world workloads, positioning Google as a serious challenger to Nvidia in the AI infrastructure space. As demand for faster and more efficient AI systems grows, the competition between TPUs and GPUs is becoming central to the future of artificial intelligence.

These chips are not just incremental upgrades they signal a major shift toward specialized AI hardware, directly challenging Nvidia’s dominance in GPUs.

What Are Google TPU 8i and 8t?

Google’s TPU 8i and 8t are custom-built AI chips (ASICs) designed specifically for machine learning tasks. Unlike CPUs or GPUs, TPUs are optimized for tensor operations, which are the backbone of deep learning models.

In this generation, Google has split its architecture into two specialized chips:

TPU 8t (Training-Focused)

- Built for training massive AI models (LLMs, multimodal systems)

- Scales across thousands of chips for distributed computing

- Delivers up to 3× performance improvement over previous TPUs

- Optimized for heavy matrix computations

TPU 8i (Inference-Focused)

- Designed for real-time AI applications

- Focuses on low latency and cost efficiency

- Offers around 2× better performance-per-watt

- Ideal for chatbots, search, recommendations, and AI agents

The key innovation is specialization. Instead of using one chip for everything, Google separates training and inference workloads, improving efficiency, scalability, and cost performance.

TPU vs GPU: Key Differences

The competition between TPUs and GPUs defines the current AI hardware landscape, especially with Nvidia leading the GPU ecosystem.

1. Architecture Design

- TPU: Purpose-built ASIC for AI tensor operations

- GPU: Originally built for graphics, later adapted for AI

TPUs are specialized for AI, while GPUs are more general-purpose.

2. Performance Optimization

- TPU: Highly optimized for deep learning tasks

- GPU: Flexible but less specialized

This allows TPUs to achieve higher efficiency in targeted AI workloads.

3. Training vs Inference Efficiency

- TPU 8t excels in training

- TPU 8i excels in inference

- GPUs handle both but without hardware-level specialization

This separation improves performance and cost efficiency at scale.

4. Power Consumption (Performance per Watt)

- TPU: Better performance-per-watt

- GPU: Higher power usage for similar workloads

Energy efficiency is a critical advantage for large data centers.

5. Ecosystem and Flexibility

- GPU (Nvidia):

- Strong ecosystem (CUDA, libraries, tools)

- Widely adopted

- TPU (Google):

- Available via Google Cloud

- Deep integration with Google’s AI stack

GPUs offer flexibility, while TPUs offer optimized performance within a controlled ecosystem.

6. Cost Efficiency

- TPU: Lower cost per AI operation (especially inference)

- GPU: Higher cost but broader usability

For large-scale AI deployments, TPUs can significantly reduce operational costs.

Why This 100%+ Performance Jump Matters

The “100%+ performance improvement” comes from a combination of:

- Up to 3× faster training performance (TPU 8t)

- Around 2× better efficiency in inference (TPU 8i)

- Improved memory bandwidth using advanced architectures

- Better performance-per-watt and performance-per-dollar

Together, these advancements dramatically reduce the cost and time required to train and run AI systems.

Google vs Nvidia: Strategic Shift

Nvidia continues to dominate with GPUs, but Google is taking a different approach:

- Nvidia focuses on general-purpose AI hardware

- Google focuses on task-specific AI chips + full ecosystem integration

Google combines:

- TPUs (hardware)

- AI models

- Cloud infrastructure

This vertical integration allows deeper optimization compared to standalone GPU solutions.

Industry Impact

- Reduced dependency on Nvidia GPUs

- Rise of custom AI chips across big tech companies

- Lower AI infrastructure costs

- Faster adoption of AI applications and agents

The market is shifting from a GPU-only approach to a multi-hardware ecosystem.

Conclusion

Google TPU 8i and 8t represent a major step forward in AI hardware:

- TPU 8t accelerates large-scale AI training

- TPU 8i enables efficient real-time AI applications

- Combined, they deliver over 100% improvement in AI performance and efficiency

This marks the beginning of a new phase in AI infrastructure one where specialized chips compete alongside GPUs, driving innovation, lowering costs, and expanding what AI can achieve.