AI privacy is becoming one of the biggest pressure points in the digital world as governments, tech giants, and users wake up to how much personal data modern AI systems actually consume. From recommendation engines to generative chatbots, almost every AI model today touches personal data in some way, raising fresh questions about who controls that information and how safely it is stored.

- Online privacy risks are rising as AI tools collect and infer sensitive details from user activity.

- New AI privacy laws in 2025 and AI-focused data protection regulations aim to put legal boundaries around what companies can do with user data.

- Emerging guardrails, from India’s Digital Personal Data Protection (DPDP) Act to the EU’s evolving AI and data laws, are forcing businesses to rethink their AI strategies.

- Users are increasingly worried about chatbot privacy risks, the privacy of browser AI assistants, and deepfake threats in everyday apps.

At the heart of this shift is a simple question: can AI deliver value without turning into a surveillance engine? Regulators, researchers, and industry leaders now see AI data protection not as an add‑on but as a core requirement for trust, innovation, and long‑term adoption.

Why AI privacy matters right now

Over the past two years, consumer AI tools have moved from niche experiments to everyday utilities embedded in browsers, messaging apps, productivity suites, and enterprise workflows. This rapid integration has amplified online privacy risks, because AI models can log queries, infer identities, and reconstruct sensitive profiles from seemingly harmless interactions. At the same time, high‑profile leaks and AI-enabled cyber-espionage threats have shown that poorly governed AI pipelines can expose user data through security gaps across the stack.

For businesses, this is no longer just a compliance checkbox. Weak AI privacy practices now create real business risk: regulatory fines, reputational damage, loss of customer trust, and higher barriers to entering regulated sectors like finance, healthcare, and education. For policymakers, AI has become the new frontline for privacy regulation, prompting a wave of fresh rules that directly target data‑hungry models and platforms.

The new wave of AI data protection regulations

Around the world, legislators are tightening data protection rules for AI to ensure that AI systems respect existing privacy principles like consent, purpose limitation, and data minimisation. In practice, this means companies must clearly explain what data is collected, how it is used to train or fine‑tune models, and how long it is retained. Many regulatory frameworks now expect AI developers to adopt privacy‑by‑design from the earliest stages of system architecture.

New and upcoming AI privacy laws in 2025 also push organisations to conduct impact assessments before deploying high‑risk AI systems. These assessments must examine potential harms to individual rights, discrimination risks, and the possibility of unintended data reuse across models. For AI teams, this translates into more documentation, stricter access controls, and tighter alignment between legal, security, and engineering teams.

DPDP Act: India’s emerging AI privacy model

India’s approach under the DPDP Act is especially important for global companies operating in large, fast‑growing digital markets. The law aims to give users greater control over how their personal data is handled, including by AI systems, with rights to consent, withdraw consent, and seek redress for misuse. For AI builders, this means they must map where Indian user data flows inside their models and logs, not just in traditional databases.
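
One way to picture consent and withdrawal inside an AI pipeline is a small ledger that every training or logging job checks before it touches a record. The sketch below is only an illustration, not a statement of what the DPDP Act requires; the `ConsentLedger` class, its method names, and the purpose labels are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ConsentLedger:
    """Tracks which processing purposes each user currently consents to."""

    # Hypothetical in-memory store: user_id -> set of purpose labels.
    _grants: dict = field(default_factory=dict)

    def grant(self, user_id: str, purpose: str) -> None:
        """Record that the user consents to one specific purpose."""
        self._grants.setdefault(user_id, set()).add(purpose)

    def withdraw(self, user_id: str, purpose: str) -> None:
        """Remove consent for a purpose; a no-op if it was never granted."""
        self._grants.get(user_id, set()).discard(purpose)

    def allows(self, user_id: str, purpose: str) -> bool:
        """Return True only if consent for this purpose is currently on record."""
        return purpose in self._grants.get(user_id, set())
```

A training job would call `allows(user_id, "model_training")` for each record and skip anything that returns `False`, so a withdrawal takes effect the next time the pipeline runs.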

Future rules and guidelines under the DPDP regime are expected to touch on algorithmic transparency, data localisation, and cross‑border data transfers. That will directly shape how global AI platforms architect their infrastructure, from training pipelines to inference endpoints hosted in local or sovereign clouds. Organisations that already invest in strong AI data protection controls will find it much easier to adapt as detailed rules converge with global standards.

Browser AI assistants and invisible tracking

One of the fastest‑growing concerns involves the privacy of browser AI assistants. These tools sit directly inside the browser, watching pages you read, text you type, and sometimes even content in private dashboards or internal tools. Without strict guardrails, they can inadvertently capture sensitive corporate information, credentials, or personal records.

For end users, it is often unclear which data is processed locally and which is sent to cloud models. That opacity makes online privacy risks feel more acute, especially when AI summaries and recommendations are powered by continuous tracking of browsing habits. Privacy‑first implementations that run models on‑device, limit logging, and clearly show when data leaves the machine will become key differentiators in the next generation of AI browsers.

Chatbot privacy risks in everyday conversations

Generative AI chatbots have turned into default interfaces for search, support, and productivity, but they also introduce new chatbot privacy risks. Conversations can contain passwords, health details, financial data, or confidential business plans. If these logs are retained and reused for training, they may resurface indirectly in other responses or be exposed through security incidents.

To address concerns about user data security, responsible AI providers are building clear separation between customer data and model training pipelines. Some vendors now offer “no training” modes, enterprise‑only instances, or private cloud deployments that keep chat logs inside a company’s own environment. For high‑risk sectors, such privacy‑preserving deployment models will increasingly be non‑negotiable.
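
The separation described above can be sketched in a few lines. This is a minimal illustration under stated assumptions: the `ChatRecord` type, the `allow_training` flag, and the two regular expressions are hypothetical, and a real deployment would need much broader detection of sensitive data than two patterns.

```python
import re
from dataclasses import dataclass

# Illustrative patterns only (assumption): e-mail addresses and
# card-like runs of 13 to 16 digits with optional spaces or hyphens.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
CARD_RE = re.compile(r"\b\d(?:[ -]?\d){12,15}\b")


def redact(text: str) -> str:
    """Replace e-mail addresses and card-like digit runs with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return CARD_RE.sub("[CARD]", text)


@dataclass
class ChatRecord:
    tenant_id: str
    message: str
    allow_training: bool  # hypothetical per-tenant "no training" switch


def training_candidates(records):
    """Return redacted messages from opted-in records only."""
    return [redact(r.message) for r in records if r.allow_training]
```

Records from tenants in "no training" mode never reach the training set, and the records that do are redacted first, so a missed opt-out and a missed pattern would both have to fail before raw sensitive text leaks into a model.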

Deepfake privacy threats and identity misuse

The rise of synthetic media has turned deepfake privacy threats into a mainstream issue. AI‑generated videos, voices, and images can be used to impersonate individuals, fabricate consent, or spread disinformation tied directly to personal identity. This is not only a security problem; it is a direct assault on AI privacy, because the boundary between genuine and synthetic personal data becomes blurred.

Regulators are now exploring watermarking, disclosure labels, and liability rules for platforms that host or distribute deepfake content. For individuals, the stakes are high: reputational harm, emotional distress, and loss of control over one’s digital likeness. Stronger AI privacy regulations, combined with robust identity verification and detection tools, are critical to curbing these abuses.

How cloud infrastructure and private AI can help

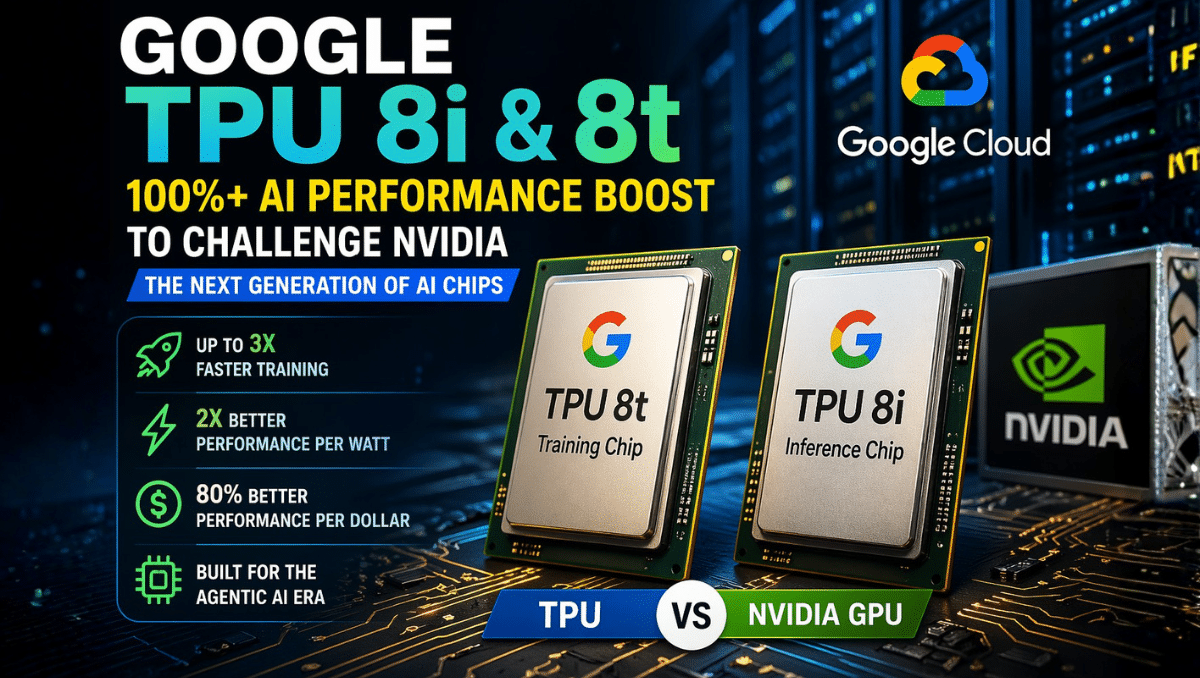

On the infrastructure side, privacy‑centric architectures are gaining momentum as organisations seek to combine powerful models with stricter controls on personal data. Google’s private AI cloud, TPU security features, and secure accelerators are being used to isolate sensitive workloads while still benefiting from advanced models. These trends align closely with efforts to harden user data security across the entire AI stack.

Industry moves such as hardened private AI clouds and secure TPUs show how infrastructure design can support AI data protection by default. When combined with encryption‑in‑use, granular access policies, and strong logging, they make it significantly harder for attackers—or insiders—to exfiltrate sensitive training data or inference logs from production systems.

What organisations should do next

For organisations building or deploying AI, the message is clear: AI privacy strategy cannot be an afterthought. Teams need clear data maps, retention policies, privacy‑aware training pipelines, and continuous monitoring of online privacy risks across models and applications. Governance frameworks should embed legal, security, and product teams into one loop.

Practical steps include conducting regular AI privacy impact assessments, limiting data collection to what is truly necessary, and giving users transparent choices about how their data is used. Investing early in compliance with AI data protection regulations and upcoming AI privacy laws in 2025 will not only reduce regulatory risk but also build long‑term trust with customers and partners who are increasingly aware of how powerful, and how invasive, modern AI systems can be.
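
Data minimisation and retention limits can be written as simple, testable rules. The sketch below is a rough illustration: `ALLOWED_FIELDS`, the 30-day `RETENTION` window, and the event layout are assumptions chosen for the example, not values taken from any regulation.

```python
from datetime import datetime, timedelta

# Hypothetical policy: the only fields the feature needs,
# and how long a log event may be kept.
ALLOWED_FIELDS = {"user_id", "query", "timestamp"}
RETENTION = timedelta(days=30)


def minimise(event: dict) -> dict:
    """Drop every field that is not on the allow-list."""
    return {k: v for k, v in event.items() if k in ALLOWED_FIELDS}


def is_expired(event: dict, now: datetime) -> bool:
    """True when the event is older than the retention window."""
    return now - event["timestamp"] > RETENTION


def apply_retention(events: list, now: datetime) -> list:
    """Keep only unexpired events, each stripped to the allowed fields."""
    return [minimise(e) for e in events if not is_expired(e, now)]
```

Running a job like this on a schedule, with the policy values agreed between legal, security, and engineering, turns a written retention policy into something that can be tested and audited.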