In a significant move amid evolving AI regulations, the UK government is exploring mandatory labeling for AI-generated content. This proposal aims to shield consumers from deepfakes and disinformation while fostering AI growth.

Key Highlights

- Mandatory Labeling Proposal: UK government consulting on visible and metadata labels for AI text, images, audio, and deepfakes to boost transparency.

- Post-Copyright Rethink: After dropping broad AI training exemptions due to artists’ backlash, focus shifts to labeling and replicas.

- Technical Standards: C2PA content credentials alongside resilient watermarks; platforms would be expected to detect and remove non-compliant content swiftly.

- Business Impact: SEO pros, creators face workflow changes; fines loom for unlabeled synthetic media by potential 2027 rollout.

- Global Alignment: Mirrors EU AI Act but lighter-touch; influences India, US deepfake laws.

- Compliance Prep: Audit tools, adopt credentials like Adobe’s; monitor ongoing consultations.

Rising Need for AI Content Labeling

The rapid proliferation of generative AI tools has flooded digital spaces with synthetic text, images, and videos. Consumers often struggle to distinguish real from AI-created material, heightening risks of misinformation.

Deepfakes pose threats to elections, personal privacy, and public trust. The UK, like the EU with its AI Act, seeks watermarking and visible labels to ensure detectability.

This places the UK within a wider policy debate on AI watermarking standards, synthetic media transparency, and generative AI disclosure rules.

UK Government’s Latest Consultation Updates

In March 2026, the UK Intellectual Property Office released reports dropping its prior copyright preferences for AI training data. Instead, it is launching fresh consultations on labeling AI-generated content, digital replicas, and licensing.

Technology Minister Liz Kendall emphasized balancing creative industries’ rights with AI innovation. No laws are planned immediately, but labeling has emerged as a priority for combating disinformation without stifling R&D.

The consultation, following 2024-2025 feedback, highlights “substantial uncertainty” in copyright reforms. Industry welcomes the rethink, especially after the government

How Labeling Would Work in Practice

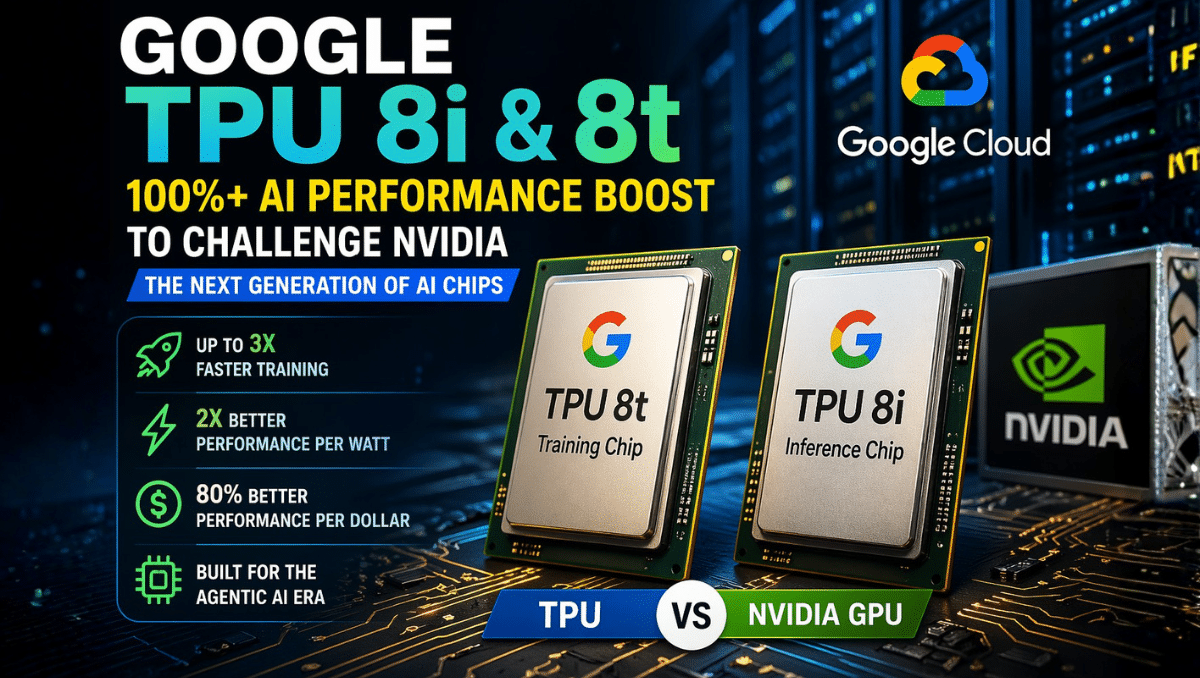

Proposed rules mirror EU AI Act Article 50, requiring machine-readable metadata in AI outputs. Visible disclosures must flag synthetic audio, images, text, and deepfakes.

Technical standards like C2PA (Coalition for Content Provenance and Authenticity) attach cryptographically signed provenance data to a file, so that later tampering is detectable. Providers ensure detectability; deployers disclose usage.
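To make the split between machine-readable metadata and a visible disclosure concrete, here is a minimal Python sketch. It is not the C2PA format: the field names, the `DEMO_KEY` shared secret, and the HMAC signature are all assumptions chosen for illustration, whereas real content credentials rely on certificate-based signatures and a much richer manifest.

```python
import hashlib
import hmac
import json

# Hypothetical shared secret for the demo; real systems use certificates.
DEMO_KEY = b"demo-signing-key"


def build_manifest(content: bytes, generator: str, key: bytes = DEMO_KEY) -> dict:
    """Create a machine-readable provenance record bound to the content bytes."""
    claim = {
        "ai_generated": True,
        "generator": generator,
        "sha256": hashlib.sha256(content).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(key, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(content: bytes, manifest: dict, key: bytes = DEMO_KEY) -> bool:
    """Return True only if the signature is valid and the content is unchanged."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(key, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False
    return manifest["claim"]["sha256"] == hashlib.sha256(content).hexdigest()


def visible_label(manifest: dict) -> str:
    """Produce the human-readable disclosure that accompanies the metadata."""
    if manifest["claim"]["ai_generated"]:
        return f"AI-generated content (tool: {manifest['claim']['generator']})"
    return ""
```

Calling `build_manifest(image_bytes, "example-model")` yields a record that `verify_manifest` accepts for the original bytes and rejects once either the content or the claim is altered. The record only proves tampering when it is present; if someone strips it entirely, nothing remains to check, which is one reason proposals pair metadata with watermarking.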

For businesses, this means updating AI tools for compliance by potential 2027 deadlines. Platforms face takedown obligations within hours for non-compliant content.

Comparison: UK vs EU AI Labeling Approaches

| Aspect | UK Proposal (2026) | EU AI Act (Article 50) |
|---|---|---|
| Scope | Text, images, audio, deepfakes | Synthetic audio, image, video, text |
| Labeling Method | Visible + machine-readable (under consultation) | Mandatory metadata + disclosure |
| Timeline | Consultations ongoing, no firm date | Applies Aug 2026 (possible delay to 2027) |
| Enforcement | Government oversight TBD | Fines up to EUR 15 million or 3% of global turnover |
| Focus | Consumer protection, no innovation barrier | Transparency for high-risk generative AI |

This table highlights UK’s flexible stance versus EU’s stricter mandates.

Implications for Businesses and Creators

SEO experts and content creators must integrate AI disclosure in workflows. Unlabeled synthetic content risks penalties, SEO demotions, or consumer distrust.

Artists, vocal in outcries, gain leverage against unauthorized replicas. Yet, voluntary codes precede mandates, allowing adaptation.

Digital marketers in India who target UK audiences can prepare by adopting tools such as Adobe Firefly’s content credentials.

Technical Solutions: Watermarking and Detection

Watermarking embeds invisible markers designed to survive compression. Robust methods rely on model-specific signatures or steganography.

Challenges include removal by cropping or AI re-edits. Interoperable standards ensure cross-platform reliability.
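The fragility described here is easy to demonstrate with the simplest form of steganography, least-significant-bit (LSB) embedding. The Python sketch below is a toy, not a production watermark: it treats an image as a flat sequence of pixel bytes, and the function names are hypothetical. Robust schemes spread the signal across the image precisely because this naive version breaks under cropping.

```python
def embed_watermark(pixels: bytes, mark: bytes) -> bytes:
    """Hide `mark` in the least significant bit of successive pixel bytes."""
    bits = [(byte >> shift) & 1 for byte in mark for shift in range(7, -1, -1)]
    if len(bits) > len(pixels):
        raise ValueError("image too small for this watermark")
    out = bytearray(pixels)
    for i, bit in enumerate(bits):
        out[i] = (out[i] & 0xFE) | bit  # overwrite only the lowest bit
    return bytes(out)


def extract_watermark(pixels: bytes, length: int) -> bytes:
    """Read `length` bytes back out of the least significant bits."""
    if length * 8 > len(pixels):
        raise ValueError("not enough pixels to hold a mark of that length")
    result = bytearray()
    for start in range(0, length * 8, 8):
        value = 0
        for pixel in pixels[start:start + 8]:
            value = (value << 1) | (pixel & 1)
        result.append(value)
    return bytes(result)


image = bytes(range(256))                 # stand-in for grayscale pixel data
marked = embed_watermark(image, b"AI")
recovered = extract_watermark(marked, 2)  # b"AI"
cropped = extract_watermark(marked[8:], 2)  # first 8 pixels cropped away
```

Each pixel changes by at most one intensity level, so the mark is invisible, yet dropping the first eight pixels shifts every bit and `cropped` no longer equals `b"AI"`.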

See EU’s Code of Practice on AI-generated content for emerging best practices.

Broader Global Context

US states like California mandate deepfake labels; China requires watermarks. Across jurisdictions, the debate centers on AI transparency obligations, synthetic content provenance, and deepfake detection technology.

UK’s approach influences Commonwealth nations, including India’s budding AI policies. Track UK AI copyright reforms for updates.

Challenges and Criticisms

Critics argue mandatory labels stigmatize AI, hindering adoption. Technical feasibility also varies: text watermarking lags behind image watermarking.

Small developers face compliance burdens. The government pledges “light-touch” regulation to spur a £50B AI economy by 2030.

Privacy concerns arise with metadata tracking origins.

Steps for Compliance Preparation

- Audit AI tools for labeling features.

- Implement C2PA-compliant workflows.

- Train teams on disclosure ethics.

- Monitor UK government AI consultations.
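As one way to start the audit step, the following Python sketch flags AI-generated items in a content inventory that lack a visible label or machine-readable metadata. The `ContentItem` fields are hypothetical; a real inventory would be populated from a CMS export or asset database, and the final rules may require different checks.

```python
from dataclasses import dataclass


@dataclass
class ContentItem:
    """One published asset; all field names are hypothetical."""
    title: str
    ai_generated: bool
    has_visible_label: bool
    has_metadata: bool


def audit(items: list[ContentItem]) -> list[tuple[str, list[str]]]:
    """Return (title, problems) for each AI-generated item missing a disclosure."""
    findings = []
    for item in items:
        if not item.ai_generated:
            continue  # human-made content needs no AI disclosure
        problems = []
        if not item.has_visible_label:
            problems.append("missing visible label")
        if not item.has_metadata:
            problems.append("missing machine-readable metadata")
        if problems:
            findings.append((item.title, problems))
    return findings


inventory = [
    ContentItem("Spring campaign banner", True, True, False),
    ContentItem("Founder interview", False, False, False),
    ContentItem("Product explainer video", True, True, True),
]
report = audit(inventory)
```

Here `report` contains a single entry for the banner, which has a visible label but no embedded metadata.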

Future Outlook: What Lies Ahead

Legislation could follow as early as late 2026, once the consultations close. Integration with the Data (Use and Access) Act 2025 will shape the outcome.

This fosters ethical AI, protecting users while enabling innovation. Developments worth tracking include AI output transparency, UK generative AI rules, and content authenticity mandates.

The UK May Require AI-Generated Content to be Labeled: Balancing Innovation and Transparency

Conclusion

The push for AI-generated content labeling in the UK reflects a broader global shift: AI is no longer just a technological issue—it’s a societal one.

Requiring labels may soon become essential to:

- Preserve trust in digital content

- Protect human creators

- Ensure responsible AI development

The real challenge lies in getting the balance right—regulating enough to protect society, but not so much that innovation is stifled.